This article covers the Commvault deduplication process: how it works, its features and benefits, and how to monitor and configure it.

CommVault Deduplication

Let’s understand what deduplication is all about:

Data deduplication is a data reduction process that identifies and eliminates duplicate data blocks during backup.

Data deduplication is also known as:

- Data reduction technique

- Single instance storage

- Intelligent compressors (because it identifies the same data block element)

So how does this process actually identify whether a particular data block is a duplicate or not?

During deduplication, the incoming data segments are compared against the data already stored in the system. If a segment already exists, the deduplication process does not store it a second time.

Let’s consider an example to understand the deduplication process better:

Assume that a document is already available in the repository, and that the same document is later edited. When the edited version is backed up, the deduplication process stores only the data blocks that changed; the unchanged blocks are simply referenced from the existing copy.

On the other hand, if the user loads exactly the same file into the repository again, the deduplication algorithm recognizes that every block already exists. No new data is written, and the new backup only records references to the blocks that are already stored.
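The scenario above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 8-byte block size, the SHA-256 signature, and the `backup` helper are hypothetical choices made to keep the example readable, not Commvault's actual implementation.

```python
import hashlib
from typing import Dict, List

BLOCK_SIZE = 8  # assumption: tiny blocks so the example stays readable


def split_blocks(data: bytes, size: int = BLOCK_SIZE) -> List[bytes]:
    """Cut the data into fixed-size blocks."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def backup(data: bytes, store: Dict[str, bytes]) -> int:
    """Store only blocks whose signature is not yet known; return how many were new."""
    new_blocks = 0
    for block in split_blocks(data):
        signature = hashlib.sha256(block).hexdigest()
        if signature not in store:
            store[signature] = block
            new_blocks += 1
    return new_blocks


store: Dict[str, bytes] = {}
original = b"AAAAAAAABBBBBBBBCCCCCCCCDDDDDDDD"  # four 8-byte blocks
edited = b"AAAAAAAABBBBBBBBXXXXXXXXDDDDDDDD"    # only the third block changed

first = backup(original, store)   # every block is new
second = backup(edited, store)    # only the changed block is new
third = backup(original, store)   # same file again: nothing new is stored
print(first, second, third)
```

The first backup writes four blocks, the edited version adds one, and reloading the original file adds none.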

Commvault Global Deduplication:

Now, as we know about the deduplication process, let’s understand more about the global deduplication method.

Within the global deduplication process, if data is copied to one node and the same data is later copied to a second node, the system recognizes that the data already exists on the first node and makes no extra copy.
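As a rough illustration of the difference, the sketch below counts how many blocks get written when two nodes back up overlapping data, first with a separate signature index per node and then with one shared index. The node names, block contents, and the `stored_blocks` helper are all hypothetical.

```python
import hashlib
from typing import Dict, List


def signature(block: bytes) -> str:
    return hashlib.sha256(block).hexdigest()


def stored_blocks(backups_per_node: Dict[str, List[bytes]], shared_index: bool) -> int:
    """Count the blocks physically written when every node backs up its data."""
    global_index = set()
    written = 0
    for blocks in backups_per_node.values():
        # A shared index models global deduplication; a fresh set models per-node deduplication.
        index = global_index if shared_index else set()
        for block in blocks:
            sig = signature(block)
            if sig not in index:
                index.add(sig)
                written += 1
    return written


backups = {
    "node1": [b"os-image", b"app-binaries", b"sales-db"],
    "node2": [b"os-image", b"app-binaries", b"hr-db"],
}
per_node_total = stored_blocks(backups, shared_index=False)
global_total = stored_blocks(backups, shared_index=True)
print(per_node_total, global_total)
```

With per-node indexes all six blocks are written; with a shared index the two blocks common to both nodes are written only once, so four blocks are stored.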

Compared to single-node deduplication, global deduplication saves more space because duplicates are detected across all nodes rather than within each node separately. It is the preferred option for businesses that deal with multiple backup targets in large data centers.

The benefits associated with Global deduplication technology:

- The process is more effective when it comes to data backup activity.

- Offers load balancing techniques.

- Better utilization of storage space saves money.

- Less stored data for the organization. So, managing data backups is comparatively easy.

What is Deduplication in CommVault?

The core purpose of deduplication is to manage backup activity effectively and make sure that no redundant data is stored. To implement a dedupe system effectively, organizations and users need to understand both its capabilities and its limitations.

Keeping these limitations in mind helps users get the best out of the deduplication process and back up data effectively.

How does deduplication work in CommVault?

The entire process is explained as a workflow:

- Generating a signature for every data block:

Whenever a data block is read from the source, a unique signature is generated for the block with the help of a hash algorithm.

- Comparing signatures:

All the signatures that are generated using the hash algorithm are stored in the deduplication database (DDB). Based on whether a signature matches an existing entry, the block is either written as new data or recorded only as a reference to the copy that already exists. The signature comparison happens at the MediaAgent level.
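The workflow above can be modeled with a toy signature database. This is a minimal sketch, not Commvault's DDB: the `DedupDatabase` class, its `check_in` method, and the use of SHA-256 are assumptions made for illustration (the source does not say which hash algorithm Commvault uses).

```python
import hashlib
from typing import Dict


class DedupDatabase:
    """Toy stand-in for a DDB: maps a block signature to a reference count."""

    def __init__(self) -> None:
        self.refs: Dict[str, int] = {}

    def check_in(self, sig: str) -> bool:
        """Record one more reference; return True if the block is new and must be written."""
        is_new = sig not in self.refs
        self.refs[sig] = self.refs.get(sig, 0) + 1
        return is_new


def make_signature(block: bytes) -> str:
    # Assumption: SHA-256 stands in for whatever hash the product really uses.
    return hashlib.sha256(block).hexdigest()


ddb = DedupDatabase()
incoming = [b"block-1", b"block-2", b"block-1", b"block-1"]
decisions = [ddb.check_in(make_signature(b)) for b in incoming]
print(decisions)
```

Only the first occurrence of each block is flagged for writing; later occurrences just raise the reference count, which is what a pruning process would need to know before it can safely delete a block.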

MediaAgent roles:

There are two MediaAgent roles available:

- Data Mover Role:

This MediaAgent role has write access to the disks where the data is stored.

- Deduplication Database Role:

This MediaAgent role has access to the DDB and stores all the data block signatures.

Deduplication do’s and don'ts

In this section, we will discuss Commvault deduplication best practices:

With the data deduplication process in place, a lot of disk space is saved by not allowing data duplication during the backup activity. To make the most of the deduplication process, organizations need to understand its limitations as well.

Do’s for data deduplication, i.e., the best practices to follow:

- Aim to maintain a good dedupe ratio. Keeping additional backups within the same dedupe system consumes less space than storing the same data on tape.

- Data deduplication works well with data created by people (for example, Word documents and database entries). It works poorly with media data (for example, photos, videos, and audio recordings). So, use the deduplication process only with content types that benefit from it.

- Always compare the standards of the data deduplication process to your current project needs.

Limitations for the dedupe systems:

Don'ts for data deduplication, i.e., the practices to avoid:

- It is advisable not to overanalyze the deduplication ratio. Initially, the deduplication ratio is always low but grows with time. So, a regular maintenance check on the size will help you understand if there is something wrong with the process.

- Don’t encrypt the data before the dedupe system processes it. Encrypted output looks random, so identical source blocks generally no longer produce matching signatures.

- Don’t compress the data before it reaches the dedupe system. Dedupe systems compress internally, so compressing upfront wastes time and makes duplicate blocks harder to detect. CommVault’s dedupe system lets users encrypt the backups and provides an option to compress them as part of the backup process; used this way, neither affects the dedupe ratio.

- Avoid multiplexing during the deduplication process.
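To see why compressing data upfront hurts deduplication, the sketch below compares two versions of the same data that differ only in their first block. It measures how many fixed-size blocks the two versions share, first on the raw bytes and then after each version has been compressed with zlib. The 256-byte block size and the generated test data are assumptions for illustration only.

```python
import random
import zlib

BLOCK = 256  # assumption: small fixed block size for the demonstration


def blocks(data: bytes):
    return [data[i:i + BLOCK] for i in range(0, len(data), BLOCK)]


def shared_fraction(old: bytes, new: bytes) -> float:
    """Fraction of the new stream's blocks that already exist in the old stream."""
    known = set(blocks(old))
    new_blocks = blocks(new)
    return sum(b in known for b in new_blocks) / len(new_blocks)


rng = random.Random(42)
v1 = "".join(f"record {i} value {i * i % 9973}\n" for i in range(3000)).encode()
noise = bytes(rng.getrandbits(8) for _ in range(BLOCK))
v2 = noise + v1[BLOCK:]  # same length as v1; only the first block differs

raw_shared = shared_fraction(v1, v2)
compressed_shared = shared_fraction(zlib.compress(v1), zlib.compress(v2))
print(f"raw: {raw_shared:.2%} shared, pre-compressed: {compressed_shared:.2%} shared")
```

On the raw data almost every block of the second version is already known. After compression, a small change near the start alters the compressed stream from that point on, so very few blocks line up any more.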

Deduplication Features:

Commvault has implemented a new generation of its deduplication process. It is designed as a one-stop solution for data backup needs that still offers flexibility and scalability.

Simpana is the Commvault software that delivers this deduplication process. With this software, the following features can be used to streamline data backup activities.

Critical data capture:

One of the best features of the CommVault deduplication process is that critical user data is captured during backup using less time and bandwidth. The process also supports all kinds of devices, such as laptops and desktops.

Tape Retention process:

With its unique process, the amount of data stored on the tapes is effectively managed. Doing this will reduce the cost associated with media and vaults.

Efficient Data center backup:

Source-side deduplication reduces the backup time and the impact on the network, because duplicate data is identified before it leaves the client.
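The network saving can be illustrated with a generic source-side deduplication exchange: the client computes signatures, asks the target which ones it lacks, and sends only those blocks. The `BackupTarget` class and `source_side_backup` function are hypothetical and do not represent Commvault's actual protocol.

```python
import hashlib


def sig(block: bytes) -> str:
    return hashlib.sha256(block).hexdigest()


class BackupTarget:
    """Hypothetical backup target that keeps blocks keyed by signature."""

    def __init__(self):
        self.blocks = {}

    def missing(self, signatures):
        """Return the signatures the target has not stored yet."""
        return [s for s in signatures if s not in self.blocks]

    def receive(self, payload):
        self.blocks.update(payload)


def source_side_backup(blocks, target) -> int:
    """Send only the blocks the target lacks; return the block bytes sent."""
    by_sig = {sig(b): b for b in blocks}
    needed = target.missing(list(by_sig))
    payload = {s: by_sig[s] for s in needed}
    target.receive(payload)
    return sum(len(b) for b in payload.values())


target = BackupTarget()
monday = [b"a" * 1024, b"b" * 1024, b"c" * 1024]
tuesday = [b"a" * 1024, b"b" * 1024, b"d" * 1024]  # one block changed since Monday
sent_monday = source_side_backup(monday, target)
sent_tuesday = source_side_backup(tuesday, target)
print(sent_monday, sent_tuesday)
```

Monday's backup sends all 3072 bytes of block data, while Tuesday's sends only the 1024 bytes of the changed block. The signatures still cross the network in both cases, but they are far smaller than the blocks themselves.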

Remote office protection:

All the backup requests of remote offices can be routed to a single instance, where a site-to-site bandwidth limit can be set.

CommVault Deduplication Ratio

The deduplication ratio plays an important role in determining the effectiveness of the data dedupe process. A ratio of 10:1 means that ten times more data is protected than the physical space it actually occupies; for example, 10 TB of backup data stored in 1 TB of disk space.

So, how is the deduplication ratio calculated?

It is the total size of the backup data before deduplication divided by the physical space that data actually occupies after deduplication.
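As a quick worked example, the calculation can be written as two small helper functions. The function names and the 10 TB / 1 TB figures are illustrative assumptions, not values reported by Commvault.

```python
def dedup_ratio(logical_bytes: int, physical_bytes: int) -> float:
    """Size of the protected data divided by the disk space it actually uses."""
    if physical_bytes <= 0:
        raise ValueError("physical_bytes must be positive")
    return logical_bytes / physical_bytes


def space_saved_percent(logical_bytes: int, physical_bytes: int) -> float:
    """Share of the logical data size that did not have to be stored."""
    return 100 * (logical_bytes - physical_bytes) / logical_bytes


TB = 10**12
ratio = dedup_ratio(10 * TB, 1 * TB)
saved = space_saved_percent(10 * TB, 1 * TB)
print(f"{ratio:.0f}:1 ratio, {saved:.0f}% space saved")
```

A 10:1 ratio therefore corresponds to 90% of the space being saved.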

Several factors affect the deduplication ratio:

- All data backup policies.

- The data retention settings.

- Type of data that is going through the deduplication process.

- Data encryption and compression techniques applied before the backup is taken.
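The effect of retention on the ratio can be shown with a deliberately simplified model: every retained full backup has the same size, and each new backup adds a fixed fraction of new unique data. The 2% change rate and the `ratio_after` function are assumptions for illustration; compression and metadata overhead are ignored.

```python
def ratio_after(full_backups: int, change_rate: float) -> float:
    """Dedup ratio after N retained full backups under the simple model above."""
    logical = full_backups                           # N full copies, in units of one full backup
    physical = 1 + (full_backups - 1) * change_rate  # first copy plus the changed data of each later copy
    return logical / physical


for n in (1, 5, 10, 30):
    print(n, round(ratio_after(n, 0.02), 2))
```

Under these assumptions the ratio starts at 1:1 for a single backup and climbs to roughly 19:1 after 30 retained backups, which is why a new deduplication store shows a low ratio at first.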

Monitoring Deduplication database:

With the help of the Deduplication database, the below areas are monitored.

- The amount of space occupied by every partition.

- The performance aspect can be closely monitored.

- The entire backup activity.

- Data related growth across all partitions.

- Analytical and statistical information, such as the unique and secondary blocks written to the DDB.

With the help of charts, the user will be able to see information like:

- Information about the data pruning activity.

- Data growth in any partition.

- The number of data blocks written to the database.

- Deduplication savings, i.e., the amount of disk space saved, shown as free space vs. used space.

Partitions Table

The partitions table provides the following data to the user:

- The number of partitions available on this particular DDB.

- The status of each partition.

- The amount of free space that is available on the disk.

What is the procedure for monitoring?

The following monitoring procedure will help you view the data.

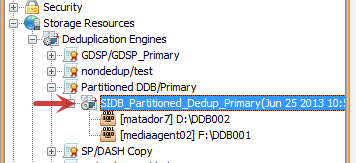

- Open the Commcell browser, expand Storage Resources, select Deduplication Engines, and then go to the storage policy copy.

- Select the deduplication database.

- A new Summary tab opens where you can see the data.

- Within the DDB stats chart, select the chart options to see the values from recent days, or view a specific time range.

Configuring Deduplication for High performance:

This section covers how to configure the CommVault deduplication process:

In this example, we consider the Dell PowerVault DL2100 in conjunction with Commvault Simpana 8 (Advanced Deduplication edition). This edition delivers end-to-end, block-based deduplication that considerably reduces MediaAgent disk storage usage.

The configuration settings below show how to achieve higher deduplication performance.

Let us understand the general guidelines which are common for everyone:

- Never place the deduplication stores on the internal system drive (i.e. C:).

- Set the block-level deduplication factor to 64k on storage policies that back up file data.

- Set the block-level deduplication factor to 128k on storage policies that back up databases.

- Enable software compression.

- Enable deduplication for all the clients and all the storage policies.

- Apply the Spill and Fill mount path setting for the PowerVault DL2100.

- Confirm that Service Pack 3 is installed.
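The guidelines above can be turned into a simple checklist. The sketch below validates a plain dictionary describing one storage policy; the dictionary keys and the `check_policy` function are hypothetical and are not part of any Commvault API, so the settings themselves still have to be read from the CommCell console by hand.

```python
RECOMMENDED_BLOCK_KB = {"file": 64, "database": 128}


def check_policy(policy: dict) -> list:
    """Return the guideline violations found in one hypothetical policy description."""
    problems = []
    expected = RECOMMENDED_BLOCK_KB.get(policy["data_type"])
    if expected is not None and policy["block_size_kb"] != expected:
        problems.append(f"block size should be {expected}k for {policy['data_type']} data")
    if policy["dedup_store_path"].upper().startswith("C:"):
        problems.append("deduplication store is on the system drive")
    if not policy["software_compression"]:
        problems.append("software compression is disabled")
    if not policy["deduplication_enabled"]:
        problems.append("deduplication is disabled")
    return problems


example = {
    "data_type": "database",
    "block_size_kb": 64,
    "dedup_store_path": "C:\\DedupStore",
    "software_compression": True,
    "deduplication_enabled": True,
}
problems = check_policy(example)
for problem in problems:
    print("-", problem)
```

For this example policy the check reports two issues: the block size should be 128k for database data, and the deduplication store sits on the system drive.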

To have these configuration settings enabled, let’s understand the process in detail:

First, create a separate primary storage policy copy and make sure that deduplication is enabled for it in the storage policy wizard.

- Right click on the storage policies from the Commcell browser

- Click on “New Storage Policy”. This will initiate a process where a new storage policy is created

- Click on “Yes” for the deduplication process in the storage policy wizard

- Check for the Magnetic Library under C: DiskStorage

Point to remember: never place the deduplication stores on the PowerVault DL2100 internal system drive (C:).

Configuring the system for block-level deduplication factor:

In this process, we will be able to set the deduplication factor at the block level on the primary storage policy copy. Also, we will have to enable deduplication.

- Open the Commcell browser, select the appropriate storage policy, and click on Properties from the shortcut menu.

- Click on the Advanced tab in the storage policy properties, and select the desired block size under Block Level Deduplication Factor.

- Click on “OK” to save
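One practical consequence of the block size is the number of signatures that have to be tracked. As a rough illustration (the 1 TiB data size is an arbitrary assumption), the arithmetic looks like this:

```python
KIB = 1024
TIB = 1024 ** 4


def signatures_needed(data_bytes: int, block_kib: int) -> int:
    """Number of fixed-size blocks, and therefore signatures, for the given data size."""
    block_bytes = block_kib * KIB
    return -(-data_bytes // block_bytes)  # ceiling division


for block_kib in (64, 128):
    print(f"{block_kib}k blocks: {signatures_needed(1 * TIB, block_kib):,} signatures per TiB")
```

Doubling the block size from 64k to 128k halves the signature count, from 16,777,216 to 8,388,608 per TiB, at the cost of detecting duplicates at a coarser granularity.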

Enabling sub-client software compression and deduplication:

- Open the copy properties dialog box for the storage policy copy.

- Select the option to enable software compression with deduplication.

- Click on the “OK” button to save the changes.

Use the following steps to enable deduplication on a sub-client:

- From the Commcell browser, select the sub-client where you want to enable deduplication and then click on “Properties”.

- Go to the Storage Device tab and open the Deduplication tab.

- Select On Client as the place where signatures are generated.

- Select the “OK” button to save.

Apply the Spill and Fill Mount Path:

The following process should be used to apply the spill and fill mount path to PowerVault DL2100 magnetic library:

- In the Commcell browser, right-click the magnetic library whose mount path allocation policy you want to set.

- Click on “Properties”.

- Select the Mount Paths tab.

- Select the “Spill and fill mount paths” option.

- Click “OK” to save the information

Confirming that Service Pack 3 for Simpana 8 is installed:

Service Pack 3 for Simpana 8 includes the deduplication performance pack. It should be installed to achieve high performance.

The following process can be used to identify whether the package is installed or not:

- Open the Commcell browser.

- Right-click the MediaAgent that you want to check.

- Click on Properties.

- Select the Version tab.

- The version information should include Service Pack 3.

- Select OK to return to the main menu.

Service Pack 3 cannot be installed through the Automatic Update feature of CommVault Simpana 8.

Conclusion:

In this article, we have covered the main aspects of CommVault deduplication: how it works, its features, best practices, monitoring, and configuration. By following these best practices and using the deduplication features, you can streamline backup activity and manage backup data more effectively.

Vinod M is a Big data expert writer at Mindmajix and contributes in-depth articles on various Big Data Technologies. He also has experience in writing for Docker, Hadoop, Microservices, Commvault, and few BI tools. You can be in touch with him via LinkedIn and Twitter.