What is Reinforcement Learning?

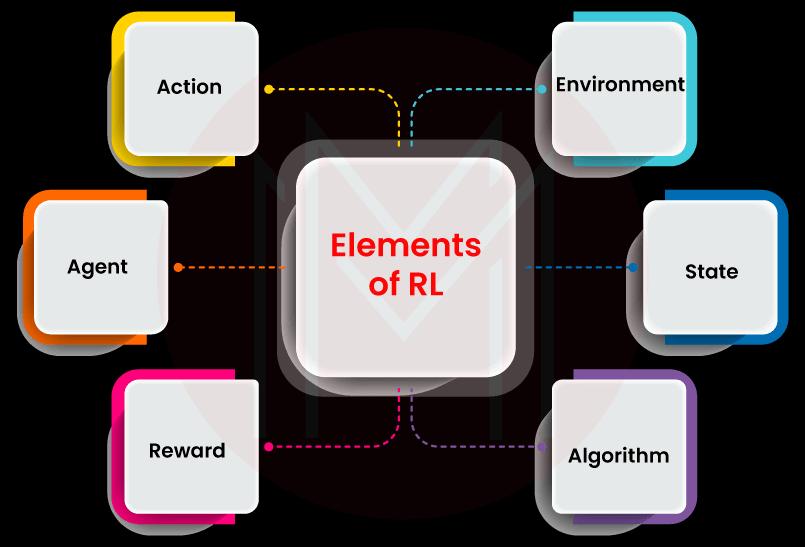

- Elements of a Reinforcement Learning System

- What is Reinforcement Learning?

- When to use Reinforcement Learning?

- How does Reinforcement Learning differ from other ML techniques?

- Types of Reinforcement Learning

- Examples of Reinforcement Learning

- Applications of Reinforcement Learning

- What are the challenges of Reinforcement Learning?

Machine Learning has three main subcategories: Supervised, Unsupervised, and Reinforcement Learning. Supervised learning trains algorithms on labeled sample data paired with the desired outputs. Unsupervised learning trains algorithms on unstructured or unlabelled data and leaves them to find patterns on their own.

It is essential to note that supervised and unsupervised learning often struggle to provide optimum results when the problem environment is uncertain and dynamic. Reinforcement learning can overcome this setback because it uses the environment's responses to an agent's actions to train algorithms and find optimum solutions to complex problems.

The significant thing about Reinforcement Learning is that it learns to make good sequences of decisions, which can lead to optimum results in less time.

In this blog, you will learn the basic concepts of reinforcement learning, how it works, RL examples, applications, etc., in greater detail.

Elements of a Reinforcement Learning System

Before diving deep into the core concepts of RL, let’s understand its basic elements, which will help you learn the concepts quickly.

1. Agent

An agent is also referred to as an AI agent. An agent is an entity in which the RL algorithm is the driving engine. Generally, agents perform actions in an environment and subsequently change the environment's state. An agent continuously learns through the trial and error method. Every agent tries to achieve the best ‘sequences of actions’ to provide an optimal solution to the problem.

2. Learning Environment

A learning environment will usually be uncertain and dynamic. It is the place where problems exist. In addition, it is a digital platform where agents perform actions and receive responses from the environment. In a way, an environment serves as a training ground for the agents.

3. State

It refers to the present status of a learning environment. Every environment comes with many features that change constantly over time. When these features change because of the agents' actions, the state of the environment changes too.

4. RL Algorithms

Generally, RL algorithms learn on their own from the responses of an environment, which come in the form of rewards and punishments. Over time, RL algorithms enhance their ability to make the best sequence of decisions for complex problems.

5. Action

An action is a decision made by an agent that affects the state of an environment. An environment responds to an agent with a reward or a punishment based on the agent's actions.

6. Reward

It is the result of an action performed by an agent. In other words, it is the response of an environment to an action. A response can be either positive or negative. Note that when the response is negative, it is referred to as punishment.
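To make these elements concrete, here is a minimal sketch of a toy environment. Everything in it is an assumption made for illustration: the `CorridorEnv` name, the five-cell corridor, and the reward values do not come from any particular library. The agent's action changes the state, and the environment answers with a reward or a small punishment.

```python
from dataclasses import dataclass


@dataclass
class CorridorEnv:
    """Hypothetical environment: a corridor of cells 0..4 with the goal at the last cell."""
    length: int = 5
    state: int = 0  # State: the cell the agent currently occupies

    def reset(self) -> int:
        """Put the agent back at the start and return the initial state."""
        self.state = 0
        return self.state

    def step(self, action: int):
        """Apply an action (-1 = left, +1 = right) and return (state, reward, done)."""
        if action not in (-1, 1):
            raise ValueError("action must be -1 or +1")
        # The action changes the state; walls keep the agent inside the corridor.
        self.state = min(max(self.state + action, 0), self.length - 1)
        done = self.state == self.length - 1
        # Reward at the goal, a small punishment for every other step.
        reward = 1.0 if done else -0.1
        return self.state, reward, done


env = CorridorEnv()
state = env.reset()
state, reward, done = env.step(+1)  # the agent moves one cell to the right
print(state, reward, done)          # 1 -0.1 False
```

The agent itself is left out on purpose: any piece of code that picks `-1` or `+1` and reads back the reward plays that role.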


What is Reinforcement Learning?

As you know, Reinforcement Learning is a subset of Machine Learning (ML). RL mimics a human learning system that relies on the ‘trial and error’ method. RL algorithms learn by trial and error, just as humans learn gymnastics, swimming, etc.

RL doesn’t rely on any historical data - labeled or unlabelled. It means that RL doesn't train its algorithms using sample data. Instead, RL algorithms learn from the actions performed by agents in a specific environment. That’s why RL is referred to as the behavioral machine-learning model.

Using RL, you can identify the best sequence of actions to provide optimum solutions to problems. As a result, you can optimize the decision-making process for complex environments. What’s more, in some cases you can find adaptive solutions to complex problems faster than humans do.


When to use Reinforcement Learning?

You can apply Reinforcement learning if you come across the following situations.

- When you have insufficient training or sample data

- When you cannot specify a desired dataset or state

- When learning can take place through interaction with the environment

Why Reinforcement Learning?

There are many reasons to use RL in complex environments. Here are a few reasons.

1. Enhance Design and Product Development

With RL, you can speed up product design and development in manufacturing plants, telecommunications, oil refineries, etc. It is also used in designing regenerative braking for electric vehicles. You can make effective designs for mines with the help of RL. Besides, you can optimize designs for environments that depend closely on parameters such as vibration, noise, and heat.

2. Simplify Complex Operations

When it comes to transportation, you can optimize routes based on factors such as weather and traffic.

For food-distributing companies, business is tightly tied to varying shipping routes, changing demand, exchange rates, etc. RL simplifies food distribution by optimizing decisions under these changing conditions, and it can even control distribution activities on an hourly basis.

With RL, you can monitor production in real time and simulate different scenarios to derive key performance parameters. By doing so, you can improve productivity. You can even find rare defects in products with the help of RL algorithms.

3. Improve Customer Interaction and Satisfaction

With RL, companies can respond positively in a highly dynamic business environment. For instance, RL allows you to send personalized messages, offers, promotions, and recommendations to customers in a dynamic environment where changes are frequent.
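As a rough illustration of the personalization idea, the sketch below uses an epsilon-greedy multi-armed bandit, one of the simplest RL-style techniques. It is a swapped-in example rather than a method this article prescribes. The offer names, the acceptance rates, and the simulated customers are all made up for the sketch.

```python
import random

# Hypothetical offers with hidden acceptance rates (invented for this sketch).
TRUE_RATES = {"discount": 0.05, "free_shipping": 0.30, "bundle": 0.10}


def run_bandit(rounds=20000, epsilon=0.1, seed=1):
    """Send one offer per round and learn which offer customers accept most often."""
    rng = random.Random(seed)
    offers = list(TRUE_RATES)
    shown = {o: 0 for o in offers}    # how many times each offer was sent
    value = {o: 0.0 for o in offers}  # running estimate of each acceptance rate
    for _ in range(rounds):
        if rng.random() < epsilon:
            offer = rng.choice(offers)                   # explore a random offer
        else:
            offer = max(offers, key=lambda o: value[o])  # exploit the best estimate
        # Simulated customer response: reward 1 if the offer is accepted, else 0.
        reward = 1.0 if rng.random() < TRUE_RATES[offer] else 0.0
        shown[offer] += 1
        value[offer] += (reward - value[offer]) / shown[offer]  # incremental mean
    return shown, value


shown, value = run_bandit()
print(max(value, key=value.get), shown)
```

Because the estimates keep updating with every response, the same loop would shift toward a different offer if customer preferences changed, which is the behavior the paragraph above describes.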

How does Reinforcement Learning Work?

As you know, trial and error is the underlying strategy of reinforcement learning. And no historical dataset or desired output is used to train RL algorithms.

In the following, let's see how RL algorithms are trained through the rewards and punishments that agents receive.

- At first, agents perform an action in a complex and uncertain environment.

- Now, the environment responds to the action.

- If the environment's response is the desired behavior, then the agent is rewarded, and RL algorithms get trained based on the reward. On the contrary, if the response is undesired, the agent receives no reward or is punished. RL algorithms get trained based on this response too.

The goal of an agent is to get maximum rewards. Typically, agents are trained with rewards and punishments. Because of this, they avoid actions that will induce undesired behaviors and perform actions that will induce desired behaviors over time. Ultimately, agents identify the sequence of actions that will help to achieve maximum rewards or optimum solutions to the problem.
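The loop described above can be sketched with tabular Q-learning, one common RL algorithm. This article does not name a specific algorithm, so treat the choice as an assumption. The corridor environment, the reward values, and the hyperparameters `ALPHA`, `GAMMA`, and `EPSILON` are likewise illustrative.

```python
import random

N_STATES, GOAL = 5, 4                  # hypothetical corridor: cells 0..4, goal at cell 4
ACTIONS = (-1, +1)                     # move left or move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1  # illustrative learning rate, discount, exploration


def step(state, action):
    """Environment response: next state, reward, and whether the episode ended."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == GOAL
    reward = 1.0 if done else -0.1     # reward at the goal, small punishment otherwise
    return next_state, reward, done


def train(episodes=200, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Trial and error: mostly use the best-known action, sometimes explore.
            if rng.random() < EPSILON:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            # Q-learning update: move the estimate toward
            # reward + discounted value of the best next action.
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += ALPHA * (reward + GAMMA * best_next - q[(state, action)])
            state = next_state
    return q


q_table = train()
# Best action learned for each non-goal cell.
policy = [max(ACTIONS, key=lambda a: q_table[(s, a)]) for s in range(GOAL)]
print(policy)  # [1, 1, 1, 1]
```

After training, the learned policy moves right from every cell: the agent has found the sequence of actions that collects the most reward.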

Rewards and punishments are the crucial aspects that differentiate RL from other ML techniques.

How does Reinforcement Learning differ from other ML techniques?

Reinforcement Learning resembles supervised learning in some respects, but the two differ in important ways.

The following table summarizes how RL differs from Supervised and Unsupervised Learning.

| Features | Supervised Learning | Unsupervised Learning | Reinforcement Learning |
|---|---|---|---|
| Dataset | Algorithms are trained on labeled datasets with desired outputs | Algorithms are trained on unstructured and unlabelled data | Algorithms are trained on the data generated when agents interact with environments |
| Output | Predicts target variables by processing labeled data | Groups unstructured data with similar behaviors | Identifies the sequence of actions that provides an optimum solution to problems |
| Function | Works based on the desired outputs | Works based on the data | Self-directed and works in its own way |
| Use-cases | Can be used for Object Recognition in images | Used for Anomaly Detection and Cluster Analysis | Best for interactive systems. It can be used in Gaming applications, Mining, Product design, etc. |
| Adaptability | Retraining of algorithms is required if underlying environment conditions change | Retraining of algorithms is required if underlying environment conditions change | RL algorithms can adapt to changes on their own |

Types of Reinforcement Learning

There are two types of reinforcement learning: Positive RL and Negative RL.

Let’s look at the types below:

Positive Reinforcement Learning

In this type, an event that follows a particular action, such as a reward, increases the strength and frequency of that action. In other words, the agent receives a positive consequence for a desirable action and becomes more likely to repeat it.

Negative Reinforcement Learning

In this type, an action is strengthened because a negative condition is stopped or avoided. In other words, the agent learns to repeat the actions that remove or prevent negative outcomes.

Examples of Reinforcement Learning

Do you still need help understanding the nuances of Reinforcement Learning?

No worries! The following real-life examples will help you to understand better.

1. Scoring Bonus Credits

Consider that a teacher awards bonus credits if a student crosses a particular benchmark in test scores. Because of this, the student tries to go beyond the benchmark in every test to get bonus credits. The student would also try to acquire maximum bonus credits by performing better on all tests. The maximum credits will help the student to pass the subject quickly. It is similar to agents trying to get maximum rewards to find an optimum solution to a problem. In this example, the student is the agent, bonus credit is the reward, scoring is the action, and the ‘studies and tests’ is the environment.

2. Chess Game

Next, consider a chess game. If a move made on the chessboard helps the player win the game, then that move is rewarded. On the contrary, if a move leads the player toward losing the game, then that move is punished. So, the player tries to choose every move so as to earn more rewards. Eventually, this helps the player win the game. In this example, the chess game is the environment, the player is the agent, and chess moves are the actions.
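In both examples the agent cares about the total reward collected over a whole sequence of actions, not about a single step. One common way to score such a sequence is the discounted return, where later rewards count a little less than earlier ones. The sketch below assumes a discount factor `gamma` and invented reward sequences. It is not a chess engine.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum the rewards, weighting a reward that arrives k steps later by gamma**k."""
    total = 0.0
    # Work backwards so each step adds its own reward plus the discounted future.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Hypothetical games: two neutral moves followed by a win (+1) or a loss (-1).
print(discounted_return([0.0, 0.0, 1.0], gamma=0.5))   # 0.25
print(discounted_return([0.0, 0.0, -1.0], gamma=0.5))  # -0.25
```

A sequence of moves that ends in a win scores higher than one that ends in a loss, so an agent that maximizes this quantity is pushed toward winning play.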

Applications of Reinforcement Learning

It's no wonder that reinforcement learning is increasingly being used in many sectors. This is because rewards and punishments train RL algorithms more effectively in uncertain and dynamic environments than other ML techniques do.

Let’s discuss the applications of RL below in detail one by one.

Automotive

Self-driving cars can use RL algorithms because RL does not depend solely on fixed ‘if-then’ instructions. The AI agent that operates the car learns from rewards and punishments. When the car responds with the desired behavior, such as moving in the right direction, the agent receives a reward. When the car responds with undesired behavior, such as moving in the wrong direction, the agent receives no reward or is punished. Over time, RL algorithms steer the car toward the right behavior. As a whole, RL can help you build a self-driving car with reduced pollution, less ride time, and increased safety through obeying road rules.

Finance

By using supervised learning, you can predict future stock prices in stock trading. However, supervised learning doesn’t recommend whether to buy, sell, or hold a stock. RL algorithms, on the other hand, can recommend buy/sell/hold decisions by evaluating benchmark standards and tracking the performance of stock trades.

Production

With RL algorithms, you can optimize scheduling and resource allocation to boost productivity. You can reduce waste and costs by optimizing warehouse logistics. RL algorithms also help to implement predictive maintenance, which in turn helps to prevent machine failures and unplanned outages. Also, you can optimize inbound and outbound delivery networks and thereby minimize shipping delays and costs.

Retail

RL algorithms help to optimize routing, logistics, and warehouse operations. So, they help keep items consistently in stock while reducing costs. They help prevent stock-outs and waste through ‘advanced inventory modeling’ and supply chain planning. RL algorithms also help to personalize offers, promotions, and product recommendations for customers. So, you can improve customer satisfaction as well as sales.

Communications

Using RL, you can optimize the network layout to increase signal coverage and reduce power consumption. You can also maximize quality and minimize downtime by managing networks in real time. Besides, you can increase cross-sell and upsell revenue.

Pharmaceuticals

With RL, you can simplify the drug discovery process. As a result, you can reduce the time and cost of research remarkably. RL allows you to introduce new therapies to the market quickly.

Resource Management

RL algorithms help optimize the allocation of resources to different tasks. Hence, you can minimize completion time and maximize the use of resources.


What are the Challenges of Reinforcement Learning?

Reinforcement learning has a few challenges too. Let’s take a look at them below:

- The performance of RL depends heavily on the environment where the problem exists. Some environments change constantly, so finding the correct behavior can take a lot of work.

- Training agents is a complex task. It requires plenty of computing resources. Also, computing time is usually long - up to thousands of hours.

What are the Benefits of Reinforcement Learning?

RL offers a lot of merits. Here are a few of them.

- You can use RL for scalable applications

- It is used to recommend products where customer preferences and behaviors change frequently

- It helps to forecast dynamic conditions accurately

- It quickly solves complex logistics problems

- It speeds up clinical trials

- It helps to analyze the impact of economic policies on consumers and of health policies on patients

Future of Reinforcement Learning

As RL imitates human behavior, mainly through trial and error, it has a broad scope in many sectors. In addition, RL algorithms don't need sample data for training, which reduces data processing time and costs. Although it is still a developing technique, researchers and scientists continue to explore more benefits from it. RL is likely to grow with more applications and use cases in the near future.

Conclusion

We hope that you have got a good idea about reinforcement learning after reading this blog. Remember, the right actions of agents result in the desired behavior of the environment, which offers rewards to the agents. This process induces the agents to take more of the right actions to maximize rewards and reach an optimum solution in less time. Reinforcement Learning is incredibly beneficial when the environment is an interactive system. Above all, no sample data or desired output is required to train RL algorithms. It is one of the ML techniques with a lot of prospects in the future.


Madhuri is a Senior Content Creator at MindMajix. She has written about a range of technologies, including Splunk, TensorFlow, Selenium, and CEH. She spends most of her time researching technology and startups.