- Comparing Application Servers: Jboss and Tomcat

- JBoss Interview Questions

- JBoss Tutorial

- JBOSS Admin Console

- Adding a JMS destination

- Adding JSF components

- Advanced deployment strategies

- Clustering entities - JBoss

- Configuring a JBoss AS Domain

- Configuring JBoss Core Subsystems

- Configuring Data Persistence - JBoss

- Configuring Enterprise Java Beans - JBoss

- Configuring Enterprise Services

- Configuring Session Cache Containers in JBOSS

- Configuring the Application Server

- Configuring the application server logging

- Configuring the domain controller

- Configuring the domain.xml file

- Configuring the EJB components

- Configuring The HTTP Connector

- Create an OpenShift Express domain - JBoss

- Creating and deploying a web application in JBoss

- Deploying applications on a JBoss AS domain

- Deploying Applications on JBoss AS 7

- Deploying applications on JBoss AS standalone

- Deploying the web application - JBoss

- JBOSS Dynamic Metrics

- Executing scripts in a file - JBoss

- Installing the JDBC driver

- JBoss AS 7 classloading explained

- JBoss Web Server Configuration in JBoss

- JBoss Load Balancing Web Applications

- Load-balancing with mod_cluster

- Management interfaces - JBoss

- Managing Mod Cluster With CLI In JBoss

- Securing Web Applications In JBoss

- JBoss SetUp - Cluster Of Domain Servers

- Cloud Computing Vs Grid Computing

- JBoss Testing Mod Cluster

- JDBC Interview Questions

JBoss Clustering is an advancement in technology that makes JBoss Application Server, a perfect enterprise-class application server. With its unique features like fail-over, distributed deployment, and load-balancing, JBoss Clustering is used to develop hefty, robust, and scalable applications.

JBoss Clustering allows various applications to run on multiple parallel servers commonly known as cluster nodes at the same time as offering application clients with a single view. The load is equally shared on different servers so that if any of the servers fails, the application continues with the help of remaining active cluster nodes.

JBoss Clustering is important to build scalable applications in enterprises as it provides the advantage to enhance the performance simply by adding extra nodes to the server. Clustering is also crucial in enterprise applications because of its infrastructure that increases redundancy and hence the availability of servers.

JBoss Cluster

Cluster Definition:

A cluster, in general, is a set of nodes that interact with each other to reach a common goal. In a JBoss Application Server, a cluster which is also known as a “partition”, is a JBoss Application Server instance. Interaction between the various nodes is handled by a library known as JGroups group communication library, in which JGroups Channel tracks the core functionality of clusters and is responsible for setting up a reliable communication between various clusters.

HAPartition:

HAPartition is a service generally used for handling multiple tasks in application server clustering. The core functionality of this service is to build a level of abstraction on top of the JGroups channel that is used to exchange messages among cluster members. HAPartition is also responsible for setting up a registry to store which cluster members are a group of which clustering services.

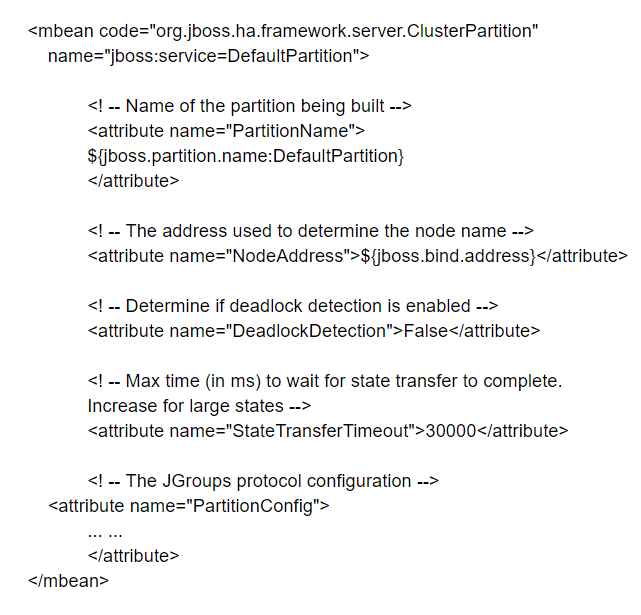

The example below shows the HAPartition MBean packaged with the regular JBoss AS distribution.

Setting up JBoss clustering

Channels in Jgroups having the same name and configuration can find each other to form a group. Executing a simple command “run -c all” on two application server instances in the same network can form a cluster. There is a scope to dynamically add or remove nodes from a cluster at any point in time just by initiating a channel with the same configuration and name that is identical to other cluster members.

In the same instance, various services can make their Channels. By default, four different services that form channels are the web session replication service, the EJB3 entity caching service, the EJB3 SFSB replication service, and a core general-purpose clustering service also called HAPartition. These channels are identified by their unique name. The configuration should match with its peers but should differ from the other channels.

The image below shows an example of JBoss server instances. The topology shows the configuration of AS instances.

Broadcast Groups

A broadcast group is a channel through which a server broadcast connectors in a network. A connector is used to set up a connection between servers.

The broadcast group comprises connector pairs in a set, each pair of connectors has connection settings for the backup server (live or optional) and broadcasts them on the server network. The group also dictates the port settings and the UDP address.

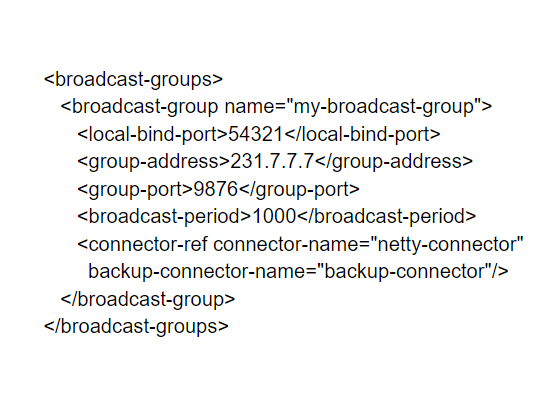

Here is an example of a broadcast group:

Discovery Groups

While the broadcast group is responsible for defining which connector information is broadcasted from a server, a discovery group is responsible for defining how connector information is being received at multiple addresses. A discovery group comprises a list of connector pairs for each broadcast sent by different servers. As this group receives broadcasts on the multicast group address from any server it updates the list with the entry for that server.

If the group doesn’t receive a broadcast from any server for a longer duration, it removes the entry of that server from its list.

JBoss Cache channels:

JBoss Cache is a distributed cache framework that can be used either as a standalone application or it can also be used as an application server environment. The application server in JBoss incorporates JBoss Cache for various services such as HTTP sessions, EJB 3.0 entity beans, and EJB 3.0 session beans. Each service is kept in a separate Mbean, and each cache possesses a JGroups Channel of its own.

- Service Architectures

The cluster topography is of great use for system administrators but for developers, cluster architecture from a client’s viewpoint matters the most. The most common clustering architectures in use by JBoss are client-side interceptors also known as stubs or smart proxies and external load balancers.

- Load-Balancing Policies

Both the architectures of JBoss AS uses load balancing policies to establish which server node should send a connection to which another node in a network.

- Farming Deployment

Farming service is used to deploy a JBoss application into the cluster. It basically does a job of deploying an application archive file such as EAR, SAR, or WAR file in the all/farm/ directory of any cluster members so that the application which is deployed can automatically get duplicated across all other nodes in that particular cluster.

- Distributed state replication services

This is the most important service in clustering architecture. It provides distributed state management in a cluster server environment. For eg, the session state should be in sync with other nodes in a stateful session bean application as the client application must reach the same session state irrespective of the node that is serving the request.

Clustered JNDI Services:

Clustered JNDI services are one of the crucial services provided by the JBoss application server. There are several features being served by HA-JNDI (High Availability JNDI):

Transparent failover of naming operations – If one HA-JNDI service fails or shuts down which is connected to a particular JBoss then the HA-JNDI client can transparently failover to another application server instance.

Load balancing of naming operations - An HA-JNDI has the tendency to automatically load balance its service requests to all the HA-JNDI servers in the cluster.

A unified view of JNDI trees across all the clusters - There is liberty with clients to connect with HA-JNDI service executing on any node in the cluster and can discover objects bound in JNDI services on any other node. There are two mechanisms to perform this task

Cross-cluster lookups – Once the client performs the lookup function, the HA-JNDI service located at the server side has the capability to discover things bound in regular JNDI service on any other node in the cluster.

A replicated cluster-wide context tree – The HA-JNDI service bound object will be replicated around the cluster, and a replica of that object can be seen in-VM present on each node in the cluster.

Visit here to learn JBoss Online Certification in Hyderabad

How does it work?

The client-side HA-JNDI naming Context in JBoss is based on the client-side interceptor architecture. The client gets the HA-JNDI proxy object from the InitialContext object and calls upon the JNDI lookup services through the proxy server on the remote server. The client requests an HA-JNDI proxy by configuring the naming properties that were used by the InitialContext object. This information that the naming context is making use of HA-JNDI remains transparent to the client.

HA-JNDI service maintains a cluster-wide context tree on the server-side. Even if there is only one node available in the cluster, the cluster-wide tree is available to take up the service request. Every node in the cluster also keeps a list of its own local JNDI context tree. The HA-JNDI service on that node can discover objects bound into the local JNDI context tree.

Client configuration

Clients operating inside the application server

To access HA-JNDI present inside the application server, get an InitialContext from JNDI properties.

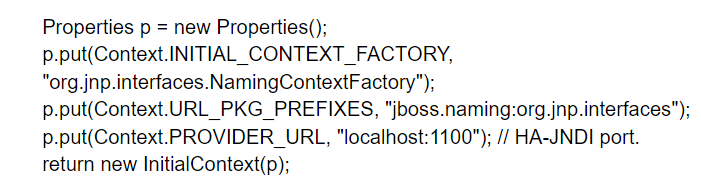

The example below shows the method to create a naming Context bound to HA-JNDI:

Clients operating outside the application server

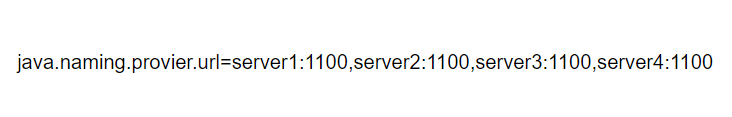

The JNDI client has to have a list of all HA-JNDI clusters. A list of JNDI servers can be released to the java.naming.provider.url JNDI setting in the jndi.properties file. Each server node is recognized by its IP address and the JNDI port number separated by commas.

Clustered Session EJBs:

Session EJBs provide remote invocation services and they are clustered based on the client-side interceptor architecture. The client application for clustered session EJBs is similar to a non clustered version with a minor difference to the java.naming.provier.URL system property to activate the HA-JNDI lookup.

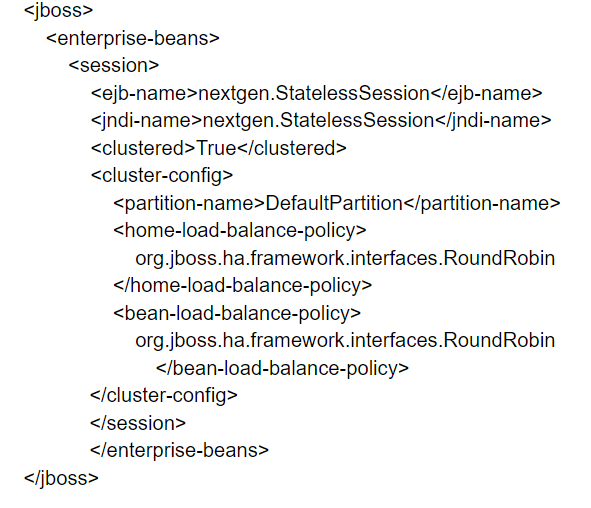

Stateless Session Bean in EJB 2.x

In the stateless session, EJBs, receiving calls can be load-balanced on any participating node in a cluster. Add a <clustered> tag to make a bean clustered, by modifying its jboss.xml descriptor.

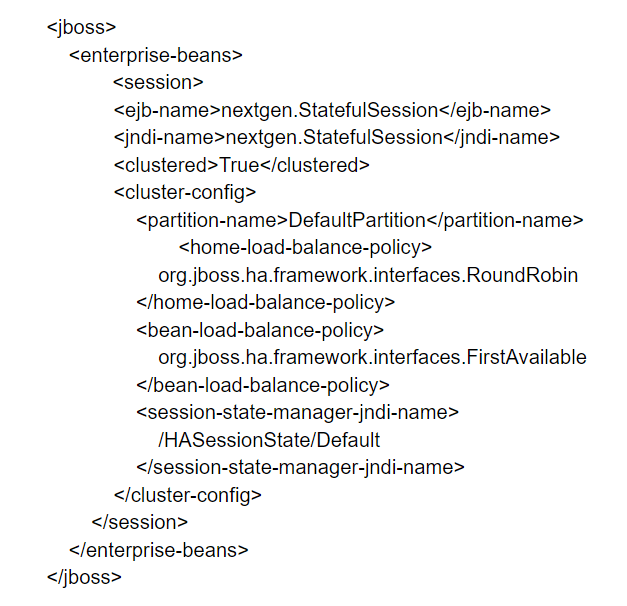

Stateful Session Bean in EJB 2.x

This is more complex as compared to stateless EJBs. The state replicates and synchronizes to all other clusters if the state of the bean changes.

In an application, the jboss.xml descriptor file needs to be modified for each stateful session bean, and the <clustered> tag is added.

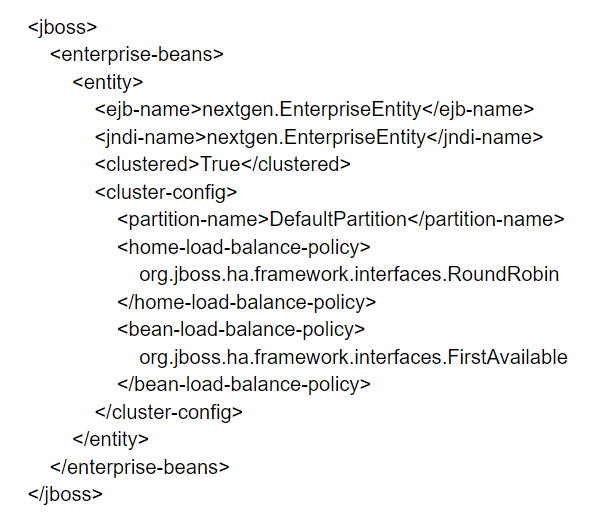

Clustered Entity EJBs:

The clustered entity bean does not differ significantly from its counterparts except for looking up the references for EJB 2.x remote bean from the clustered HA-JNDI.

Entity Bean in EJB 2.x

The entity bean in EJB 2.x should only be used in a special case when the data is read-only, or one read-write node with read-only nodes synchronized with the cache invalidation services.

An example is shown below:

Entity Bean in EJB 3.0

Entity Bean EJB 3.0, mainly used as a persistence data model and cannot be used for remote services. Only deal with replication and distributed caching and do not support load balancing.

Clustered JMS Services:

Clustered JMS services are executed the same as a clustered singleton fail-over service.

- High Availability Singleton Fail-over

The JBoss HA-JMS service uses only a master node in a cluster at a time. The cluster starts using another node to run clustered JMS service in case a master node fails and all supporting services along with the available information gets deployed on that new server. This framework reduces redundancy but does not impact the work-load on the JMS server node.

- Server-Side Configuration

The difference between HA-JMS found in all configurations and the non-H-JMS version found in the default configuration is where most of the configuration files are located. In HA-JMS, mostly these files are located in deploy-singleton/JMS directory, and not in deploy/JMS.

- Non-MDB HA-JMS Clients

The client in HA-JMS differs from regular clients in these two aspects.

The client in HA-JMS client needs to look up for JMS connection factories along with queues and topics by using HA-JNDI services

In case the client is a J2EE component such as a session bean or any web application executing inside the AS, the lookup can be configured with the use of the component's deployment descriptors

- Load Balanced HA-JMS MDBs

HA-JMS MDBs can send and receive messages from multiple nodes from the master node, unlike HA-JMS that runs only on a single node.

JBossCache and JGroups Services:

- JGroups Configuration

The JGroups setup offers services to enable peer-to-peer interactions between various nodes in a cluster. It is built on top of the stack that provides transport, reliability, discovery, failure detection, and cluster management services

- Common Configuration Properties

down_thread to create an internal queue and a queue processing thread or down_thread for messages that are received from higher layers.

up_thread is almost similar with only one difference that messages are received from lower layers in the protocol stack instead of higher layers.

- Transport Protocols

The transport protocols basically send messages either from one cluster node to another which is Known as unicast or from one cluster node to all other nodes in the cluster known as multicast. UDP, TUNNEL, and TCP are transport protocols.

- UDP configuration

UDP makes use of multicast to communicate messages. UDP needs configuration in the UDP sub-element in the JGroups Config element for its functions.

- TCP configuration

TCP is a unicast protocol that generates more traffic when the size increases. To use TCP, the TCP element needs to be defined in the JGroups Config element.

- TUNNEL configuration

The TUNNEL protocol makes use of an external router to send messages. This external router is most commonly known as a GossipRouter.

Conclusion:

JBoss Clustering is a boom for JBoss Application Server as it makes it a true enterprise-class application server. With its unique features like fail-over, distributed deployment features, and load balancing, JBoss Clustering aims to provide large, robust, and scalable applications. It offers full clustering support for J2EE applications as well as EJB 3.0 POJO applications.

On-Job Support Service

On-Job Support Service

Online Work Support for your on-job roles.

Our work-support plans provide precise options as per your project tasks. Whether you are a newbie or an experienced professional seeking assistance in completing project tasks, we are here with the following plans to meet your custom needs:

- Pay Per Hour

- Pay Per Week

- Monthly

| Name | Dates | |

|---|---|---|

| JBoss Training | Apr 25 to May 10 | View Details |

| JBoss Training | Apr 28 to May 13 | View Details |

| JBoss Training | May 02 to May 17 | View Details |

| JBoss Training | May 05 to May 20 | View Details |

Ravindra Savaram is a Technical Lead at Mindmajix.com. His passion lies in writing articles on the most popular IT platforms including Machine learning, DevOps, Data Science, Artificial Intelligence, RPA, Deep Learning, and so on. You can stay up to date on all these technologies by following him on LinkedIn and Twitter.