
This Kubernetes tutorial gives you an overview of Kubernetes and covers its fundamentals.

Kubernetes is ‘an open-source orchestration engine designed for automating the deployment, scaling, and management of containerized applications.’ It works with containers at any scale and provides tooling to group containers into logical units and to track, manage, and monitor all of them. It is widely regarded as the standard tool for container management.

Kubernetes Tutorial for Beginners

In this Kubernetes Tutorial, we will go through the below topics

- What is Kubernetes?

- Kubernetes Features

- Kubernetes Architecture

- Components of Kubernetes Node

- Docker Engine Installation

- Deploying an Application using Kubernetes

Getting started with Kubernetes

What is Kubernetes?

Kubernetes is a system designed to manage containerized applications of distinct kinds across a cluster of nodes. It was designed to address the disconnect between the way modern, clustered infrastructure is designed and the assumptions that applications make about their environment. Almost all cluster technologies strive to provide a uniform platform for application deployment.

The user should not have to care about where work is scheduled. The unit of work presented to the user is at the service level and may be carried out by any of the member nodes. On the flip side, many applications built with scaling in mind are actually made up of smaller component services that must be scheduled on the same host. This matters even more when they rely on specific networking conditions in order to communicate appropriately.

Consider Applications rather than Servers

Kubernetes, with its elegant abstractions, lets developers think in terms of applications rather than individual containers on specific servers, pet servers, hostnames, and so on. Pods, replication controllers, and services are the basic units of Kubernetes and are used to describe the system’s desired state. Kubernetes handles deployment based on these rules and goes a step further by proactively monitoring, scaling, and auto-healing the services to maintain their desired state.


Kubernetes Features

Kubernetes helps users respond quickly and efficiently to customer demands with the following features:

- Deploy applications quickly and predictably.

- Roll out new features seamlessly and scale your applications on the fly.

- Limit the usage of hardware to required resources only.

- Helps to relieve the burden of running applications in public and private clouds.

This tutorial provides a walkthrough of the basics of the Kubernetes cluster orchestration system. Each module contains background information on major Kubernetes features and concepts and includes an interactive online tutorial. The tutorial lets the reader manage a simple cluster and its containerized applications.

Using this interactive Kubernetes tutorial, you can learn how to:

- Deploy a containerized application on a cluster

- Scale and debug the containerized application

- Update the containerized application using a new software version.
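Updating to a new software version is usually done gradually, so that some copies of the application keep serving traffic while others are replaced. The following is a minimal Python sketch of that idea, not Kubernetes code: `rolling_update` and `batch_size` are hypothetical names chosen for illustration, and each pod is modelled as nothing more than a version string.

```python
def rolling_update(pods, new_version, batch_size=1):
    """Replace pod versions in small batches.

    Yields a snapshot of the pod list after each batch so the
    intermediate, partially updated states are visible.
    This is a toy model; real pods are not plain strings.
    """
    pods = list(pods)  # work on a copy, leave the caller's list untouched
    for start in range(0, len(pods), batch_size):
        end = min(start + batch_size, len(pods))
        for index in range(start, end):
            pods[index] = new_version
        yield list(pods)


if __name__ == "__main__":
    for snapshot in rolling_update(["v1", "v1", "v1"], "v2"):
        print(snapshot)
```

With a batch size of one, at most one copy is being replaced at any moment, which is the property that keeps the application available during the update.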


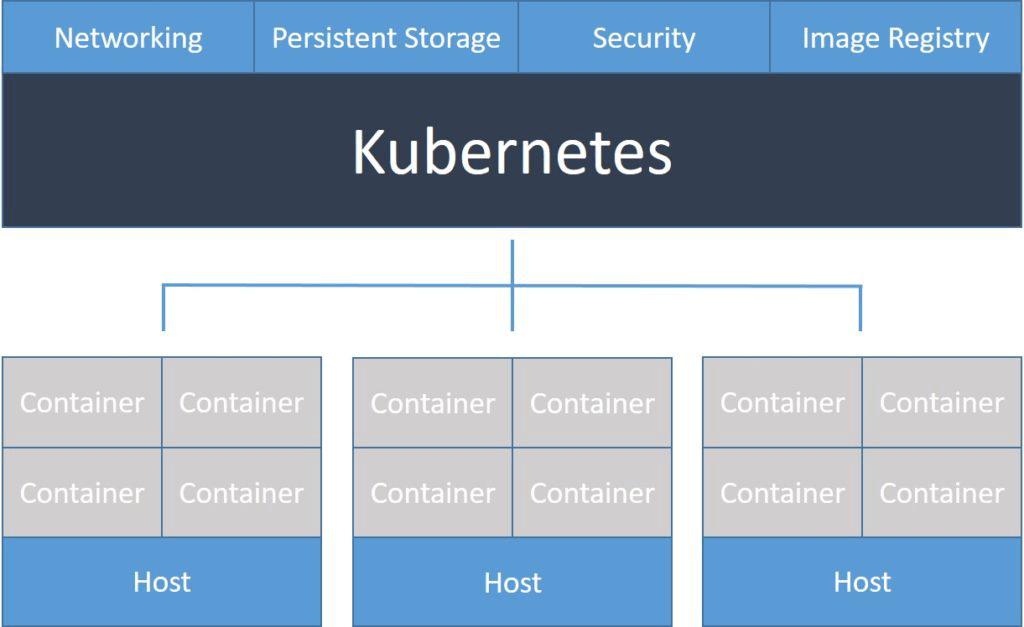

Kubernetes Architecture

Master Components

Infrastructure-level systems such as CoreOS strive to create a uniform environment where each host is interchangeable and disposable. Kubernetes, on the other hand, operates with a certain level of host specialization.

The services that control a Kubernetes cluster are known as the master or control plane components. They act as the primary management contact point for administrators and provide several cluster-wide systems for the relatively dumb worker nodes. These services can be installed on a single machine or distributed across multiple machines.

The cluster follows a client-server architecture, with the master installed on one machine and the nodes on separate Linux machines.

Components of the Kubernetes Master Machine

The key components of the Kubernetes master are as follows:

Etcd

It stores the configuration information that can be used by each of the nodes in the cluster. It is a highly available key-value store that can be distributed among multiple nodes. Because it may hold sensitive information, it should be accessible only by the Kubernetes API server.
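To make the idea of a key-value configuration store more concrete, here is a small in-memory sketch in Python. It is only an illustration and does not use the etcd client API: `TinyKeyValueStore`, its methods, and the example key are hypothetical. It models two behaviours that etcd does provide, a revision counter that increases on every write and the ability to watch for changes.

```python
class TinyKeyValueStore:
    """Toy in-memory stand-in for a configuration store such as etcd."""

    def __init__(self):
        self._data = {}        # key -> (value, revision of last write)
        self._revision = 0     # store-wide counter, bumped on every write
        self._watchers = []    # callbacks notified on each write

    def put(self, key, value):
        """Store a value and notify watchers; returns the new revision."""
        self._revision += 1
        self._data[key] = (value, self._revision)
        for callback in self._watchers:
            callback(key, value, self._revision)
        return self._revision

    def get(self, key):
        """Return the stored value, or None if the key is unknown."""
        entry = self._data.get(key)
        return None if entry is None else entry[0]

    def watch(self, callback):
        """Register a callback(key, value, revision) for future writes."""
        self._watchers.append(callback)


if __name__ == "__main__":
    store = TinyKeyValueStore()
    store.watch(lambda key, value, rev: print("changed:", key, value, rev))
    store.put("/example/web/replicas", "3")  # illustrative key only
    print(store.get("/example/web/replicas"))
```

The watch mechanism is what lets other components react to configuration changes instead of polling for them.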

API Server

The API server exposes the Kubernetes API and provides all operations on the cluster through it. It implements an interface that different tools and libraries can readily communicate with. A kubeconfig file holds the connection details that client tools use to communicate with the API server.

Controller Manager

This component runs most of the controllers that regulate the state of the cluster. It runs in a non-terminating loop and is responsible for collecting information and sending it to the API server. It watches the shared state of the cluster and makes changes to bring the current state toward the desired state. Its key controllers include the replication controller, namespace controller, service account controller, and endpoint controller. The controller manager runs distinct kinds of controllers to handle nodes, endpoints, and so on.
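The core of such a controller is a comparison between the desired state and the observed state. The Python sketch below shows one pass of that comparison for a replica count. It is a simplified illustration under stated assumptions: `reconcile` is a hypothetical function, pods are represented only by their names, and a real controller would talk to the API server rather than receive plain lists.

```python
def reconcile(desired_replicas, running_pods):
    """Compute what one pass of a control loop would need to do.

    desired_replicas: how many pods should exist.
    running_pods: names of the pods that currently exist.
    Returns a dict with the number of pods to create and the
    names of surplus pods to delete.
    """
    missing = desired_replicas - len(running_pods)
    if missing > 0:
        # Too few pods: ask for the missing ones to be created.
        return {"create": missing, "delete": []}
    # Enough or too many pods: delete the surplus from the end of the list.
    surplus = -missing
    return {"create": 0, "delete": running_pods[len(running_pods) - surplus:]}


if __name__ == "__main__":
    print(reconcile(3, ["web-a"]))
    print(reconcile(1, ["web-a", "web-b", "web-c"]))
```

A controller repeats this comparison indefinitely, which is why a pod that crashes is replaced without anyone issuing a new command.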

Scheduler

The scheduler is one of the key components of the Kubernetes master. It is responsible for distributing the workload: it tracks resource utilization on the cluster nodes and places each workload on a node that has the resources available to accept it. In short, it allocates pods to available nodes.
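A very reduced version of this placement decision can be sketched in Python. The example is an assumption-laden toy: `schedule` is a hypothetical function, nodes are described only by their free CPU, and the "most free CPU wins" rule stands in for the much richer filtering and scoring that the real scheduler applies.

```python
def schedule(pod_cpu, free_cpu_by_node):
    """Pick a node for a pod that needs `pod_cpu` units of CPU.

    free_cpu_by_node maps node name -> free CPU units.
    Nodes that cannot fit the pod are filtered out, then the node
    with the most free CPU is chosen. Returns None if nothing fits.
    """
    candidates = {
        name: free for name, free in free_cpu_by_node.items() if free >= pod_cpu
    }
    if not candidates:
        return None  # the pod stays unscheduled until capacity appears
    return max(candidates, key=candidates.get)


if __name__ == "__main__":
    nodes = {"node-a": 1, "node-b": 4, "node-c": 3}
    print(schedule(2, nodes))
    print(schedule(8, nodes))
```

Returning `None` when no node fits mirrors the fact that a workload can remain pending until resources become available.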


Components of Kubernetes Node

The following are the key components of the node server that are necessary to communicate with the Kubernetes master.

1. Docker - Docker is the primary requirement of every node. It runs the encapsulated application containers in a lightweight, isolated operating environment.

2. Kubelet Service - This service on each node is responsible for relaying information to and from the control plane. It interacts with the etcd store to read configuration details and write values. It assumes responsibility for maintaining the state of work on the node, and it manages pods on the node, volumes, secrets, new container creation, and so on.

3. Kubernetes Proxy Service - This is a proxy service that runs on each node and helps make services available to external hosts. It forwards requests to the correct containers, performs primitive load balancing, and ensures that the networking environment is accessible, predictable, and isolated. It also manages network rules, port forwarding, and so on.

Setting up Kubernetes

Before setting up Kubernetes, it is necessary to set up a virtual data center (VDC), which can be thought of as a set of machines that communicate with each other over the network.

Once the IaaS setup on any cloud is complete, you need to configure the Master and the Node.

Prerequisites

Docker Installation − Docker is required for every Kubernetes installation. The steps to install Docker are as follows:

Step 1 − Log on to the machine with the login credentials of the root user.

Step 2 − Update the package information using apt.

Step 3 − Run the following commands.

Step 4 − Add the new GPG key.

Step 5 − Update the apt package index.

$ sudo apt-get update

After completing the above tasks, start the actual Docker engine installation by verifying the kernel version.

Docker Engine Installation

Run the following commands to install the Docker engine.

Step 1 − Log in to the machine.

Step 2 − Update the package index.

$ sudo apt-get update

Step 3 − Install the Docker engine using the following command.

$ sudo apt-get install docker-engine

Step 4 − Start the Docker daemon.

$ sudo service docker start

Step 5 − Verify the installation of the Docker engine using the command below.

$ sudo docker run hello-world

Install etcd 2.0

Run the following commands to install etcd on the Kubernetes master machine.

In the above set of commands −

After downloading etcd, save it under the specified name and then un-tar the tar package.

Create a directory named bin within /opt and copy the extracted files to that location.

Now we can build Kubernetes, which must be installed on all the machines in the cluster.

The above command will create an _output directory in the root of the Kubernetes folder. Next, extract that directory into a directory of your choice, such as /opt/bin.

Next comes the networking part, where the Kubernetes master and node setup actually begins. To do this, make an entry in the hosts file on the node machine.

Following will be the output of the above command.

Output

Now, the actual configuration starts on Kubernetes Master.

Start with copying all the configuration files to their correct location.

The above command will copy all the configuration files to the required location. Now return to the directory where the Kubernetes folder was built.

The next step is to update the copied configuration file under the /etc directory.

Configure etcd on the master using the following command.

$ ETCD_OPTS="-listen-client-urls=http://kube-master:4001"

Deploying an Application using Kubernetes

Kubeapps is the easiest and quickest way to deploy an application in Kubernetes. It is a Kubernetes dashboard that supercharges a cluster with simple browsing and deployment of apps in any format. It also provides a complete application delivery environment that empowers users to launch, review, and share applications. Kubeapps is an open-source project, and users are encouraged to check out the latest version. It can be deployed in a cluster in minutes.

The Kubeapps project includes the following:

CLI

Running the Kubeapps CLI tool in a terminal window bootstraps Kubeapps on the cluster. The complete application delivery environment can be installed with a single command.

Dashboard

For simplified deployment, Kubeapps provides an in-cluster toolset of over 100 Kubernetes-ready applications that are packaged as Helm charts and Kubeless functions.

Hub

This is a web-based community designed to discover, rate, and review pre-packaged Kubernetes applications, which are accessible with the Kubernetes cluster.

Why Kubernetes?

At a minimum, Kubernetes can schedule and run application containers on clusters of physical and virtual machines. However, it also allows developers to ‘cut the cord’ to physical and virtual machines, moving from a host-centric infrastructure to a container-centric one that provides the advantages inherent to containers. It provides the infrastructure required to build a complete container-centric development environment. Kubernetes builds on the container technology underlying Docker, which has been part of the Linux kernel for some time.

Kubernetes allows users to deploy cloud-native applications and manage them exactly according to their requirements anywhere and at every point of time. Its key features include:

- An Infrastructure framework for today

- Modularity for better Management

- Updating and Deploying software for Scale

- Laying a strong foundation for Cloud-native apps

In addition to these, Kubernetes allows users to get the most out of containers and to build cloud-native applications that can run anywhere, independent of cloud-specific requirements. It is an effective model for fast, straightforward application development and operations.


Suneel, a Technology Architect with a decade of experience in various tech verticals like BPM, BAM, RPA, cybersecurity, cloud computing, cloud integration, software development, MERN Stack, and containerization (Kubernetes) apps, is dedicated to simplifying complex IT concepts in his articles with examples. Suneel's writing offers clear and engaging insights, making IT accessible to every tech enthusiast and career aspirant. His passion for technology and writing guides you with the latest innovations and technologies in his expertise. You can reach Suneel on LinkedIn and Twitter.